The Black Box Problem of AI Agents

AI agents are no longer simple chatbots. Modern agent systems run multi-step reasoning loops, call external tools, make autonomous decisions, and chain multiple LLM calls together before delivering a result. They browse the web, write code, query databases, and orchestrate other agents.

But here is the uncomfortable truth: most builders have no idea what is happening inside their agent while it runs. When an agent fails, hallucinates, or burns through tokens without producing results, you are left staring at the final output with no visibility into the dozens of intermediate steps that led there.

This is the agent observability problem. Over 2025 and 2026, a new category of tools has emerged to address it.

What Agent Observability Actually Means

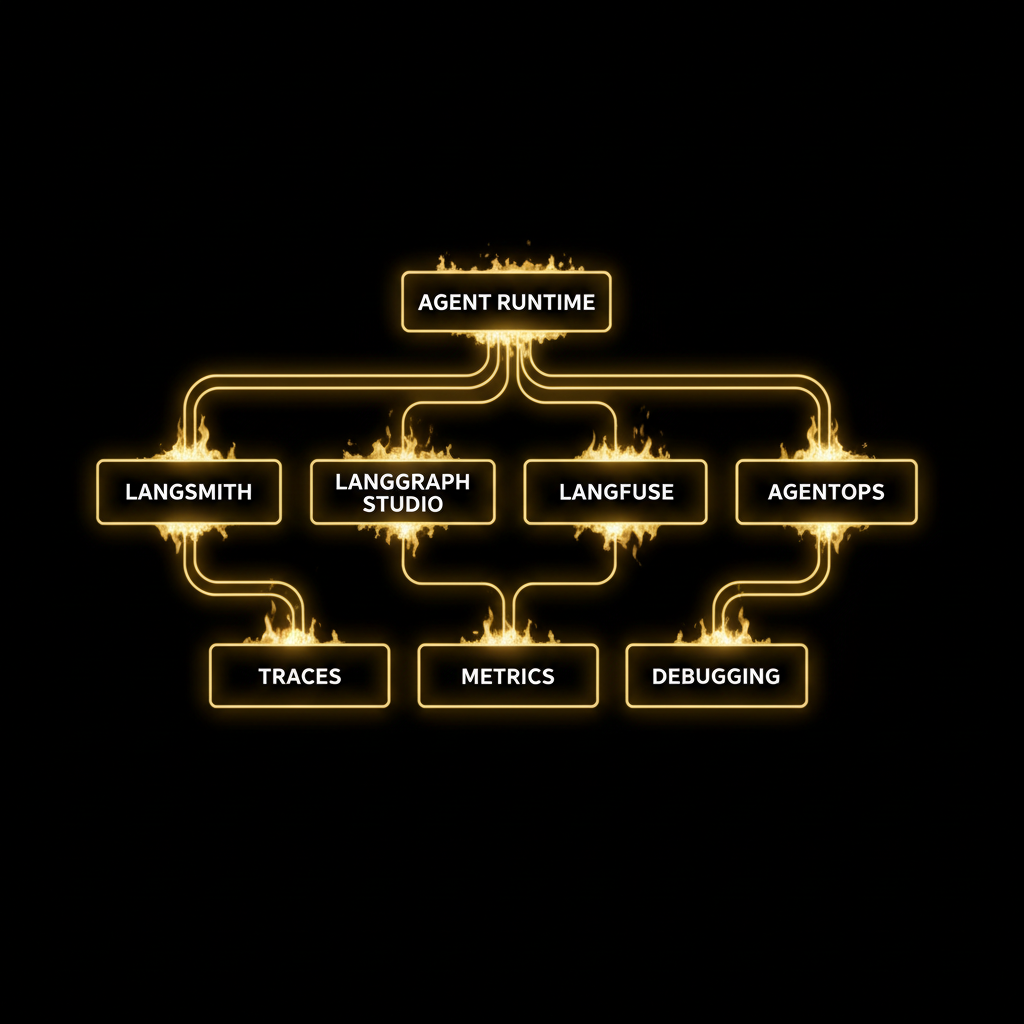

Traditional software observability tracks logs, metrics, and traces. Agent observability borrows these concepts but applies them to a fundamentally different runtime:

- Traces capture the full execution path of a request: every LLM call, every tool invocation, every decision point, every retry, nested inside each other like Russian dolls.

- Metrics track token usage, latency per step, cost per request, error rates, and model performance over time.

- Debugging means being able to step through an agent's reasoning chain after the fact, understanding why it chose tool A over tool B, and where the context window started degrading.
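To make the "Russian dolls" idea concrete, here is a minimal sketch of nested spans using only the Python standard library. The `Tracer` and `Span` classes and the step names are hypothetical and exist only for illustration; they are not the API of any tool discussed in this article, and real tracers export spans to a backend instead of keeping them in memory.

```python
import time
from contextlib import contextmanager
from dataclasses import dataclass, field


@dataclass
class Span:
    """One unit of agent work: an LLM call, a tool call, a retry, and so on."""
    name: str
    kind: str
    started: float = 0.0
    ended: float = 0.0
    children: list["Span"] = field(default_factory=list)

    @property
    def duration_ms(self) -> float:
        return (self.ended - self.started) * 1000


class Tracer:
    """Hypothetical in-memory tracer (illustration only)."""

    def __init__(self) -> None:
        self.roots: list[Span] = []
        self._stack: list[Span] = []

    @contextmanager
    def span(self, name: str, kind: str):
        current = Span(name=name, kind=kind, started=time.perf_counter())
        # Attach to the span that is currently open, or record a new root.
        parent_list = self._stack[-1].children if self._stack else self.roots
        parent_list.append(current)
        self._stack.append(current)
        try:
            yield current
        finally:
            current.ended = time.perf_counter()
            self._stack.pop()


def render(span: Span, depth: int = 0) -> list[str]:
    """Flatten the span tree into indented lines, parents before children."""
    lines = [f"{'  ' * depth}{span.kind}: {span.name}"]
    for child in span.children:
        lines.extend(render(child, depth + 1))
    return lines


tracer = Tracer()
with tracer.span("answer_question", "agent"):
    with tracer.span("plan", "llm"):
        pass
    with tracer.span("web_search", "tool"):
        with tracer.span("retry_1", "retry"):
            pass
    with tracer.span("summarize", "llm"):
        pass

trace_lines = render(tracer.roots[0])
print("\n".join(trace_lines))
```

The indentation in the printed output mirrors the nesting: the retry sits inside the tool call, which sits inside the agent run. A waterfall view is essentially this tree with each span's duration drawn on a time axis.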

The key difference from traditional APM: agent behavior is non-deterministic. The same input can produce wildly different execution paths. This makes observability not just useful but essential.

The Current Landscape

LangSmith — The Integrated Platform

LangSmith, built by the team behind LangChain, is one of the most mature agent observability platforms available today. It captures end-to-end traces with a waterfall view that reveals the sequence and timing of every component in your agent chain. Custom dashboards track token usage, latency percentiles (P50, P99), error rates, cost breakdowns, and user feedback scores.
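As a rough illustration of what sits behind dashboard numbers like latency percentiles and cost breakdowns, the sketch below computes them from raw per-step records. The record layout, the sample values, and the prices in `PRICE_PER_1K` are assumptions made up for this example. The percentile uses the simple nearest-rank method, which may differ from whatever a given platform implements.

```python
import math

# Hypothetical USD prices per 1,000 tokens; real prices depend on model and provider.
PRICE_PER_1K = {"input": 0.001, "output": 0.002}


def percentile(values, pct):
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def request_cost(steps):
    """Sum token usage over every step of one request and convert it to USD."""
    input_tokens = sum(s["input_tokens"] for s in steps)
    output_tokens = sum(s["output_tokens"] for s in steps)
    cost = (input_tokens / 1000) * PRICE_PER_1K["input"]
    cost += (output_tokens / 1000) * PRICE_PER_1K["output"]
    return round(cost, 6)


# One request made of three steps (made-up sample data).
steps = [
    {"name": "plan", "input_tokens": 1000, "output_tokens": 500},
    {"name": "web_search", "input_tokens": 0, "output_tokens": 0},
    {"name": "summarize", "input_tokens": 3000, "output_tokens": 1000},
]

# End-to-end latencies of five requests, in milliseconds (made-up sample data).
latencies_ms = [120, 200, 250, 300, 2400]

p50 = percentile(latencies_ms, 50)
p99 = percentile(latencies_ms, 99)
cost_usd = request_cost(steps)
print(f"P50={p50} ms, P99={p99} ms, cost=${cost_usd}")
```

With this sample, the median looks healthy at 250 ms while P99 lands on the 2400 ms outlier. That gap is why tail percentiles matter more than averages for agents, where a single runaway loop can dominate the user experience.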

If you are already in the LangChain ecosystem, integration is mostly a matter of setting a few environment variables (a tracing flag and an API key), with no changes to your agent code. The platform shines at tracing complex multi-step workflows, especially when combined with LangGraph for graph-based agent orchestration.

LangGraph Studio — The Visual Debugger

LangGraph Studio takes a different approach: it is not just an observability tool but a full agent IDE. You can see your agent's execution graph visualized in real time, watch nodes light up as they execute, inspect intermediate states, and even pause the agent mid-execution to step through it manually.

The killer feature is time-travel debugging: you can rewind to any point in the agent's execution, modify the state, and replay from there. Think of it as breakpoints for agent reasoning. Studio v2 added the ability to download production traces and replay them locally, bridging the gap between production monitoring and local development.
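The rewind, modify, and replay idea can be sketched in a few lines of plain Python. This is not LangGraph Studio's implementation or API. The step functions, the `run` helper, and the state keys are all hypothetical. The sketch only shows the underlying technique: snapshot the state before every step, then restart from any snapshot with an edited state.

```python
import copy


def plan(state):
    state["plan"] = f"search for {state['question']}"
    return state


def search(state):
    state["results"] = [f"doc about {state['question']}"]
    return state


def answer(state):
    state["answer"] = f"Based on {len(state['results'])} result(s): {state['results'][0]}"
    return state


STEPS = [plan, search, answer]


def run(state, start=0, checkpoints=None):
    """Run STEPS from index `start`, snapshotting the state before each step."""
    # Keep only the history that precedes the replay point.
    checkpoints = [] if checkpoints is None else checkpoints[:start]
    for step in STEPS[start:]:
        checkpoints.append(copy.deepcopy(state))
        state = step(copy.deepcopy(state))
    return state, checkpoints


# Original run.
final, checkpoints = run({"question": "agent observability"})

# Rewind to just before the `answer` step, edit the state, and replay from there.
edited = copy.deepcopy(checkpoints[2])
edited["results"] = ["hand-picked doc"]
replayed, _ = run(edited, start=2, checkpoints=checkpoints)

print(final["answer"])
print(replayed["answer"])
```

Only the `answer` step runs again during the replay, so the plan from the original run is preserved while the final answer reflects the edited search results. Deep copies keep each checkpoint immutable, which is what makes rewinding safe.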

Langfuse — The Open Source Alternative

Langfuse is the open source answer to LangSmith, with over 19,000 GitHub stars and an MIT license. It can be self-hosted via Docker in minutes, which matters enormously for teams that cannot send their prompts and responses to third-party cloud services.

The platform covers tracing with multi-turn conversation support, prompt versioning with a built-in playground, and flexible evaluation pipelines. Native SDKs exist for Python and JavaScript, plus connectors for LangChain, LlamaIndex, and dozens of other frameworks. For teams building custom agent systems outside the major frameworks, Langfuse's framework-agnostic approach is a significant advantage.

AgentOps — Purpose-Built for Agents

While LangSmith and Langfuse started from LLM observability and expanded toward agents, AgentOps was built specifically for agent workflows from day one. It tracks agent sessions as first-class objects, with built-in support for multi-agent scenarios, tool call analytics, and agent-specific metrics that go beyond simple LLM tracing.
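To illustrate what treating a session as a first-class object can look like, here is a small sketch of per-session tool call analytics across two agents. The `Session` and `ToolCall` classes, the agent names, and the tool names are hypothetical and are not taken from the AgentOps SDK. They only show the kind of aggregation that agent-specific metrics imply.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class ToolCall:
    agent: str
    tool: str
    ok: bool


@dataclass
class Session:
    """Hypothetical session object grouping all tool calls of one agent run."""
    session_id: str
    calls: list[ToolCall] = field(default_factory=list)

    def record(self, agent: str, tool: str, ok: bool = True) -> None:
        self.calls.append(ToolCall(agent, tool, ok))

    def calls_per_tool(self) -> Counter:
        """How often each tool was invoked, across all agents."""
        return Counter(call.tool for call in self.calls)

    def error_rate_by_agent(self) -> dict[str, float]:
        """Fraction of failed tool calls for each agent in the session."""
        totals, failures = Counter(), Counter()
        for call in self.calls:
            totals[call.agent] += 1
            if not call.ok:
                failures[call.agent] += 1
        return {agent: failures[agent] / totals[agent] for agent in totals}


session = Session("demo-session")
session.record("researcher", "web_search")
session.record("researcher", "web_search", ok=False)
session.record("writer", "file_write")

tool_counts = session.calls_per_tool()
error_rates = session.error_rate_by_agent()
print(tool_counts, error_rates)
```

Grouping by session and by agent answers questions that flat LLM traces do not, such as which agent in a multi-agent run is responsible for most failed tool calls.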

What Actually Matters When Choosing

The decision comes down to three questions:

1. Framework lock-in tolerance: LangSmith and LangGraph Studio work best within the LangChain ecosystem. Langfuse and AgentOps are framework-agnostic. If you are building a custom agent runtime, the agnostic tools give you more flexibility.

2. Data sovereignty: Can your prompts and model responses leave your infrastructure? If not, self-hosted Langfuse is the most straightforward option covered here. LangSmith is primarily a managed cloud service, with self-hosting offered on its enterprise tier. This is not a minor consideration when your agents process customer data, internal documents, or proprietary knowledge bases.

3. Development vs. production focus: LangGraph Studio is unmatched for development-time debugging. LangSmith and Langfuse are stronger for production monitoring. Most teams will eventually need both capabilities.

The Bigger Picture

Agent observability is not a luxury. As agents become more autonomous, more complex, and more integrated into business-critical workflows, running them without observability is like running a production web service without logging. You will survive until you don't.

The tooling is maturing fast. In 2024, most teams were flying blind. By early 2026, there are multiple production-ready options across open source and commercial offerings. The best time to instrument your agent system was when you built it. The second best time is now.